This blog is based on a CXO Insight Series conversation between Preston Wood and Aditya Sundararam on LinkedIn Live. Watch the full episode here.

In today’s cybersecurity landscape, it’s no longer enough to ingest more logs. CISOs face deeper, more systemic challenges as the foundational architecture of the modern enterprise SOC relies on antiquated SIEMs, siloed data landscapes, and brittle data pipelines which have reached their limit.

The main takeaway? If CISOs don’t rethink their approach to telemetry and pipelines, they’ll continue to fall behind. Not because they lack the tools, but because data strategies don’t work on a broken data foundation.

The Real CISO problem: Data Sprawl without Context

Preston opened the session by recounting a story familiar to many security leaders: SOCs whose SIEMs are drowning in irrelevant data, and security analysts who are overwhelmed by a noisy tsunami of alerts and struggling to investigate and manage their security posture effectively.

“You’ve got a 24/7 SOC and a dozen tools throwing off logs, but your team is still asking the same questions: what’s actually going on here?”

Preston

The issue isn’t visibility, it’s clarity. As Preston noted, enterprise SOCs don’t just suffer from managing volume; they suffer from lack of trust in their data. When logs are duplicated, out of order, lack context, and come in formats that were invented long after the tools meant to make sense of them, analysts spend more time normalizing and querying than detecting and responding.

SIEMs aren’t the Answer - they’re the Bottleneck

The session detailed serious limitations of the SIEM-centric model:

- Too rigid: Legacy SIEMs demand proprietary formats and expensive tuning to onboard new sources.
- Too noisy: SIEMs want to collect all your data for pattern analysis, but all they do is raise costs and leave it to the SOC to figure out what matters.
- Too slow: Detection happens after the fact, once data has been shipped, indexed, and queried.
- Too expensive: License and compute costs scale linearly with ingestion, which has grown 1000x in the last 10 years, but SIEM effectiveness has not increased.

“When your security ROI is gated by how many terabytes you can afford to ingest, you’re already behind.”

Preston

Preston argued that SIEMs still have a role - but not as the data movement engine. That role now belongs to something else: the security data pipeline.

Security Data Pipelines: Why they Matter

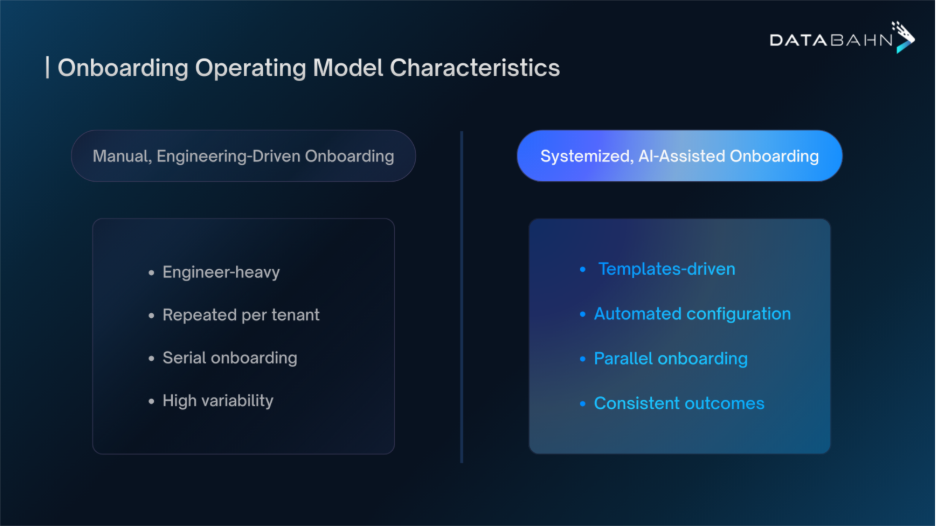

For security leaders wrestling with log sprawl, cloud complexity, and regulatory risk, the answer isn’t going to come from continuing to overload the SIEM; that approach has led to overwhelming telemetry sprawl, an increasingly fragmented environment, and mounting telemetry pressure. To elevate enterprise SOC operations, security leaders should treat the data pipeline as the part of their security architecture that can unlock the future for them.

The shift means rethinking how data flows through the stack. Instead of sending everything to the SIEM and dealing with the noise later, security teams should route and filter telemetry at the edge, well before it reaches their analytics tools. Enrichment can also happen upstream, left of the SIEM, adding contextual signals from the enterprise environment and reducing dependency on external threat feeds. Normalization can occur just once, as the data is collected and aggregated, ideally using open schemas like OCSF, so that the same data can be reused for different use cases: detection, investigation, and compliance. By placing intelligent data pipelines before the SIEM, teams can significantly cut egress, compute, and SIEM licensing costs while improving the signal-to-noise ratio.
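To make the left-of-SIEM flow concrete, here is a minimal Python sketch of a pipeline stage that normalizes once, filters noise, drops duplicates, and enriches with local context before anything is forwarded. It is an illustration only, not DataBahn's implementation: the field names, the `ASSET_CONTEXT` lookup, and the `NOISY_EVENT_TYPES` set are hypothetical, and the common shape is merely OCSF-inspired rather than the actual OCSF schema.

```python
from typing import Iterable, Iterator

# Hypothetical asset inventory used for upstream enrichment.
ASSET_CONTEXT = {
    "10.0.0.5": {"owner": "payments", "criticality": "high"},
}

# Assumed low-value event types that should never reach the SIEM.
NOISY_EVENT_TYPES = {"heartbeat", "debug"}


def normalize(raw: dict) -> dict:
    """Map vendor-specific field names onto one small common shape."""
    return {
        "time": raw.get("ts") or raw.get("timestamp"),
        "src_ip": raw.get("src") or raw.get("source_ip"),
        "event_type": (raw.get("type") or raw.get("event") or "unknown").lower(),
        "message": raw.get("msg", ""),
    }


def process(events: Iterable[dict]) -> Iterator[dict]:
    """Normalize, filter, de-duplicate, and enrich events before forwarding."""
    seen = set()
    for raw in events:
        event = normalize(raw)  # normalize once, at collection time
        if event["event_type"] in NOISY_EVENT_TYPES:
            continue  # filter noise at the edge
        key = (event["time"], event["src_ip"], event["event_type"], event["message"])
        if key in seen:
            continue  # drop exact duplicates, even across vendor formats
        seen.add(key)
        # Enrich with context from the local environment.
        event["asset"] = ASSET_CONTEXT.get(event["src_ip"], {})
        yield event
```

Because normalization runs first, two copies of the same event arriving in different vendor formats collapse into one record, and only that single enriched record would count against downstream ingestion.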

Ultimately, this isn’t about adding a new tool into an already complex toolchain. It’s about building an intelligent and foundational data fabric layer which understands the environment, aligns with business and risk priorities, and prepares the organization for an AI-driven future. This is essential for SOCs looking to lean into AI use cases, because without AI-ready data, security tools leveraging AI are just window dressing.

AI-ready Security starts with Agentic Pipelines

Preston warned his fellow CISOs that most security vendors are racing to bolt AI onto their dashboards to capitalize on the current hype cycle. True AI-driven security begins at the pipeline layer, which delivers structured, enriched, clean telemetry that is collected and governed in real time. This is the input that LLMs or reasoning engines can build on for future SOC use cases.

DataBahn’s platform was purpose-built for this future, using Agentic AI to automate parsing, schema detection, enrichment, and routing. With products like Cruz and Reef operating as intelligent assistants embedded in the data plane, leveraging Agentic AI to learn, adapt, and evolve alongside security teams, security decision makers can free their teams from the manual drudgery of managing data movement and focus them on strategic goals. Agentic AI pipelines also create a foundational data layer to ensure that your AI-powered security tools are equipped with the data, context, policy, and understanding required to deliver value.

"Agentic AI doesn't start in the UI. It starts with the data fabric. It starts with being able to reason over telemetry that actually makes sense."

Preston

What CISOs should do now

The session closed with a call to action for CISOs navigating their next big data decision: whether that’s SIEM migration, XDR adoption, or cloud expansion.

- Rethink your architecture: Stop treating your SIEM as the center; start with the pipeline.
- Control your data before it controls you: Invest in a governance-first pipeline layer that helps you decide what gets seen, stored, or suppressed.
- Choose future-proof platforms: Look for vendor-agnostic, AI-native solutions that decouple ingestion from analytics, and leverage agentic AI to let you evolve without replatforming.
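The "seen, stored, or suppressed" decision in the list above can be pictured as an ordered routing policy. The Python sketch below is a simplified assumption of how such a policy might look: the `Action` names, the rule conditions, and fields such as `severity` and `compliance_relevant` are all hypothetical and were not described in the session.

```python
from enum import Enum


class Action(Enum):
    SIEM = "siem"        # seen: forwarded to analytics
    ARCHIVE = "archive"  # stored: kept in lower-cost storage
    DROP = "drop"        # suppressed: never leaves the pipeline


# Hypothetical ordered rules; the first matching rule wins.
POLICY = [
    (lambda e: e.get("event_type") == "heartbeat", Action.DROP),
    (lambda e: e.get("severity", 0) >= 7, Action.SIEM),
    (lambda e: e.get("compliance_relevant", False), Action.ARCHIVE),
]

# Assumed default: keep unmatched events cheaply rather than lose them.
DEFAULT_ACTION = Action.ARCHIVE


def route(event: dict) -> Action:
    """Return the destination for an event under the policy above."""
    for matches, action in POLICY:
        if matches(event):
            return action
    return DEFAULT_ACTION
```

Keeping the rules as data rather than burying them in SIEM-side queries is one way to make the policy reviewable and to change destinations without replatforming.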

The future belongs to organizations that control their telemetry, set up a streamlined data fabric, and prepare their stack for AI – not just in theory, and not for tomorrow, but put into practice today.

