Today’s SOCs don’t have a detection problem or an AI readiness problem. They have a data architecture problem. Enterprises generate terabytes of security telemetry daily, but most of it never meaningfully contributes to detection, investigation, or response. It is ingested late and with gaps, parsed poorly, queried manually and infrequently, and forgotten quickly. Meanwhile, detection coverage remains stubbornly low and response times remain painfully long, leaving enterprises vulnerable.

This becomes more pressing when you account for attackers using AI to find and leverage vulnerabilities. 41% of incidents now involve stolen credentials (Sophos, 2025), and once access is obtained, lateral movement can begin in as little as two minutes. Today’s security teams are ill-equipped and ill-prepared to respond to this challenge.

The industry’s response? Add AI. But most AI SOC initiatives are cosmetic: a conversational layer over the same ingestion-heavy, unreliable pipeline, fed by data that is neither structured nor optimized for AI. What SOCs need is an architectural shift that restructures telemetry, reasoning, and action so that AI functions as the operating system of the SOC, with its output designed to help human analysts improve security posture.

The Myth Most Teams Are Buying

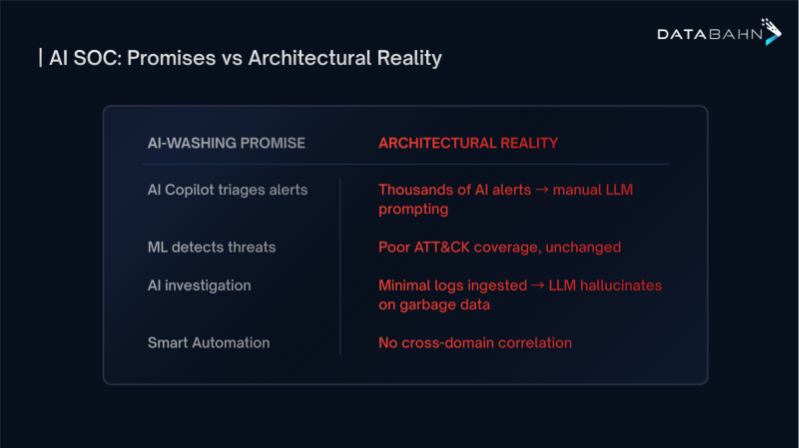

Most “AI SOC” initiatives follow a similar pattern. New intelligence is introduced at the surface of the system, while the underlying architecture remains intact. Sometimes this takes the form of conversational interfaces. Other times it shows up as automated triage, enrichment engines, or agent-based workflows layered onto existing SIEM infrastructure.

This ‘bolted-on’ AI changes how analysts interact with the SOC, and only incrementally; it does not change outcomes. What has not changed is the execution model. Detection is still constrained by the same indexes, the same static correlation logic, and the same alert-first workflows. Context is still assembled late, per incident, and largely by humans. Reasoning still begins after an alert has fired, not continuously as data flows through the environment.

This distinction matters because modern attacks do not unfold as isolated alerts. They span identity, cloud, SaaS, and endpoint domains, unfold over time, and exploit relationships that traditional SOC architectures do not model explicitly. When execution remains alert-driven and post-hoc, AI improvements only accelerate what happens after something is already detected.

In practice, this means the SOC gets better explanations of the same alerts, not better detection. Coverage gaps persist. Blind spots remain. The system is still optimized for investigation, not for identifying attack paths as they emerge.

That gap between perception and reality looks like this:

Each gap above traces back to the same root cause: intelligence added at the surface, while telemetry, correlation, and reasoning remain constrained by legacy SOC architecture.

Why Most AI SOC Initiatives Fail

Across environments, the same failure modes appear repeatedly.

1. Data chaos collapses detection before it starts

Enterprises generate terabytes of telemetry daily, but cost and normalization complexity force selective ingestion. Cloud, SaaS, and identity logs are often sampled or excluded entirely. When attackers operate primarily in these planes, detection gaps are baked in by design. Downstream AI cannot recover coverage that was never ingested.

2. Single-mode retrieval cannot surface modern attack paths

Traditional SIEMs rely on exact-match queries over indexed fields. This model cannot detect behavioral anomalies, privilege escalation chains, or multi-stage attacks spanning identity, cloud, and SaaS systems. Effective detection requires sparse search, semantic similarity, and relationship traversal. Most SOC architectures support only one.

3. Autonomous agents without governance introduce new risk

Agents capable of querying systems and triggering actions will eventually make incorrect inferences. Without evidence grounding, confidence thresholds, scoped tool access, and auditability, autonomy becomes operational risk. Governance is not optional infrastructure; it is required for safe automation.

4. Identity remains a blind spot in cloud-first environments

Despite being the primary attack surface, identity telemetry is often treated as enrichment rather than a first-class signal. OAuth abuse, service principals, MFA bypass, and cross-tenant privilege escalation rarely trigger traditional endpoint or network detections. Without identity-specific analysis, modern attacks blend in as legitimate access.

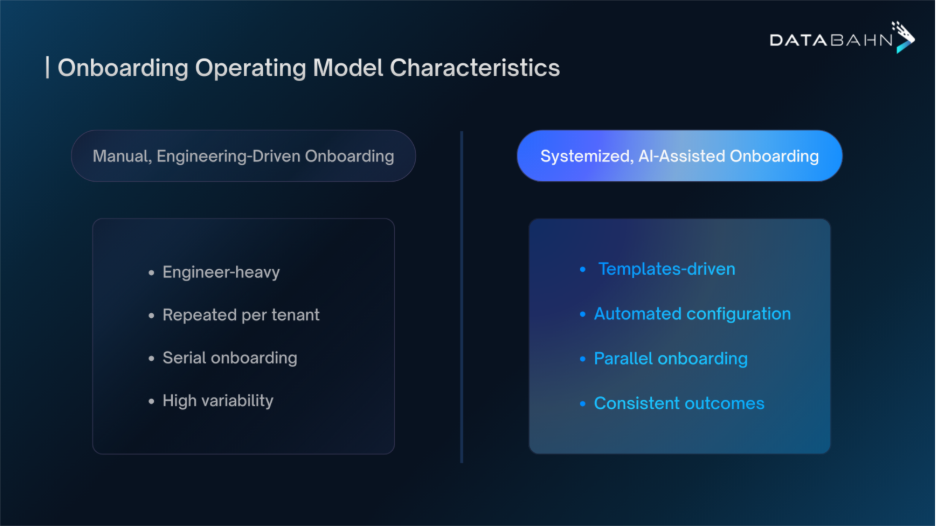

5. Detection engineering does not scale manually

Most environments already process enough telemetry to support far higher ATT&CK coverage than they achieve today. The constraint is human effort. Writing, testing, and maintaining thousands of rules across hundreds of log types does not scale in dynamic cloud environments. Coverage gaps persist because the workload exceeds human capacity.

The Six Layers That Actually Work

A functional AI-native SOC is not assembled from features. It is built as an integrated system with clear dependency ordering.

Layer 1: Unified telemetry pipeline

Telemetry from cloud, SaaS, identity, endpoint, and network sources is collected once, normalized using open schemas, enriched with context, and governed in flight. Volume reduction and entity resolution happen before storage or analysis. This layer determines what the SOC can ever see.
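
A minimal sketch of what "collected once, normalized, and reduced in flight" could look like. The field mappings, the alias table, and the `NormalizedEvent` shape are all hypothetical and chosen for illustration; a real deployment would more likely map onto an open schema such as OCSF and use a far richer entity-resolution step.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, Tuple


@dataclass(frozen=True)
class NormalizedEvent:
    """Simplified common schema (an assumption, not a published standard)."""
    timestamp: str
    source: str
    actor: str
    action: str
    target: str


# Hypothetical per-source mappings from raw field names to the common schema.
FIELD_MAPS: Dict[str, Dict[str, str]] = {
    "idp": {"timestamp": "ts", "actor": "user_email",
            "action": "event_type", "target": "app"},
    "cloud": {"timestamp": "eventTime", "actor": "principal",
              "action": "eventName", "target": "resource"},
}

# Hypothetical alias table used for entity resolution.
ALIASES: Dict[str, str] = {"svc-jdoe": "jdoe@example.com"}


def normalize(source: str, raw: dict) -> NormalizedEvent:
    """Map a raw record onto the common schema and resolve the actor."""
    mapping = FIELD_MAPS[source]
    actor = str(raw[mapping["actor"]]).lower()
    return NormalizedEvent(
        timestamp=raw[mapping["timestamp"]],
        source=source,
        actor=ALIASES.get(actor, actor),  # entity resolution
        action=raw[mapping["action"]],
        target=raw[mapping["target"]],
    )


def pipeline(records: Iterable[Tuple[str, dict]]) -> Iterator[NormalizedEvent]:
    """Normalize each record and drop exact duplicates before storage."""
    seen = set()
    for source, raw in records:
        event = normalize(source, raw)
        if event in seen:  # volume reduction: skip exact duplicates
            continue
        seen.add(event)
        yield event
```

Because resolution happens before storage, an identity-provider login and a cloud API call by the same person end up under one actor, which is what later cross-domain correlation depends on.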

Layer 2: Hybrid retrieval architecture

The system supports three retrieval modes simultaneously: sparse indexes for deterministic queries, vector search for behavioral similarity, and graph traversal for relationship analysis. This enables detection of patterns that exact-match search alone cannot surface.
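
To make the three retrieval modes concrete, here is a toy in-memory sketch. The event records, the two-dimensional "behavior" vectors, and the access graph are invented for illustration; a production system would use a real index, an embedding model, and a graph store rather than Python lists and dictionaries.

```python
import math
from collections import deque

# Invented sample data: events with a tiny behavioral embedding each.
EVENTS = [
    {"id": "e1", "actor": "alice", "action": "login", "vec": [1.0, 0.0]},
    {"id": "e2", "actor": "bob", "action": "token_grant", "vec": [0.9, 0.1]},
    {"id": "e3", "actor": "bob", "action": "list_buckets", "vec": [0.0, 1.0]},
]

# Invented access graph: who or what can reach what.
EDGES = {
    "alice": ["role:dev"],
    "role:dev": ["role:admin"],
    "role:admin": ["bucket:finance"],
}


def sparse_search(events, **fields):
    """Deterministic exact-match lookup over indexed fields."""
    return [e for e in events if all(e.get(k) == v for k, v in fields.items())]


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def vector_search(events, query_vec, top_k=2):
    """Rank events by behavioral similarity to a query vector."""
    ranked = sorted(events, key=lambda e: cosine(e["vec"], query_vec), reverse=True)
    return ranked[:top_k]


def reachable(edges, start, max_hops=3):
    """Breadth-first traversal: everything reachable within max_hops."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, hops + 1))
    seen.discard(start)
    return seen
```

An exact-match query finds bob's events, the similarity query surfaces bob's `token_grant` as behaviorally close to alice's login, and the traversal shows that alice can reach `bucket:finance` through two role hops. None of the three modes alone answers all three questions.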

Layer 3: AI reasoning fabric

Reasoning applies temporal analysis, evidence grounding, and confidence scoring to retrieved data. Every conclusion is traceable to specific telemetry. This constrains hallucination and makes AI output operationally usable.
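
One way to picture evidence grounding and confidence scoring is the sketch below. The `Finding` type, the `ground` function, and especially the scoring formula are assumptions made for illustration, not a description of any particular product: a conclusion is kept only if it cites telemetry that actually exists, and its confidence rises with the number of independent sources that corroborate it.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Finding:
    claim: str
    evidence_ids: List[str]
    confidence: float = 0.0


def ground(finding: Finding, telemetry: Dict[str, dict],
           min_evidence: int = 2) -> Optional[Finding]:
    """Return a grounded copy of the finding, or None if it lacks evidence.

    Evidence IDs that do not resolve to stored telemetry are discarded, so a
    model cannot support a claim with events that were never observed.
    """
    valid = [eid for eid in finding.evidence_ids if eid in telemetry]
    if len(valid) < min_evidence:
        return None  # reject conclusions that cannot be traced to telemetry
    sources = {telemetry[eid]["source"] for eid in valid}
    # Hypothetical scoring rule: more independent sources, more confidence.
    confidence = min(1.0, 0.4 + 0.2 * len(sources))
    return Finding(finding.claim, valid, confidence)
```

The important property is the rejection path: a finding that cites only unknown event IDs never reaches an analyst, regardless of how plausible its wording is.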

Layer 4: Multi-agent system

Domain-specialized agents operate across identity, cloud, SaaS, endpoint, detection engineering, incident response, and threat intelligence. Each agent investigates within its domain while sharing context across the system. Analysis occurs in parallel rather than through sequential handoffs.

Layer 5: Unified case memory

Context persists across investigations. Signals detected hours or days apart are automatically linked. Multi-stage attacks no longer rely on analysts remembering prior activity across tools and shifts.
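
A simplified sketch of how signals separated in time might be linked automatically. The `CaseMemory` class, its seven-day default window, and the rule "link when entities overlap" are illustrative assumptions; a fuller design would also merge cases that turn out to be related and would persist state outside process memory.

```python
from datetime import datetime, timedelta


class CaseMemory:
    """Attach each new signal to an open case that shares an entity with it."""

    def __init__(self, window: timedelta = timedelta(days=7)):
        self.window = window
        self.cases = {}  # case_id -> {"entities", "signals", "last_seen"}
        self._next_id = 1

    def add_signal(self, when: datetime, entities, description: str) -> int:
        entities = set(entities)
        for case_id, case in self.cases.items():
            recent = when - case["last_seen"] <= self.window
            if recent and case["entities"] & entities:
                # Shared entity within the window: extend the existing case.
                case["entities"] |= entities
                case["signals"].append(description)
                case["last_seen"] = max(case["last_seen"], when)
                return case_id
        # No related case found: open a new one.
        case_id = self._next_id
        self._next_id += 1
        self.cases[case_id] = {
            "entities": entities,
            "signals": [description],
            "last_seen": when,
        }
        return case_id
```

Note that a case's entity set grows as signals arrive, so a later signal that mentions only `role:admin` can still join a case that began with a suspicious login by `alice`.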

Layer 6: Zero-trust governance

Policies constrain data access, reasoning scope, and permitted actions. Autonomous decisions are logged, auditable, and subject to approval based on impact. Autonomy exists, but never without control.
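
The sketch below shows one possible shape for an impact-based approval gate. The policy table, agent and action names, impact tiers, and the 0.7 confidence threshold are all hypothetical. What it illustrates is the pattern: deny anything out of scope, escalate high-impact actions to a human, and log every decision.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy: which actions each agent may request, and their impact.
POLICY = {
    "identity-agent": {
        "enrich_alert": "low",
        "revoke_session": "medium",
        "disable_account": "high",
    },
}

AUDIT_LOG = []  # every decision is recorded, whatever the outcome


def authorize(agent: str, action: str, confidence: float,
              min_confidence: float = 0.7) -> Decision:
    impact = POLICY.get(agent, {}).get(action)
    if impact is None:
        decision = Decision.DENY  # out of scope: deny by default
    elif impact == "low":
        decision = Decision.ALLOW
    elif impact == "medium" and confidence >= min_confidence:
        decision = Decision.ALLOW
    else:
        # High impact, or medium impact with weak confidence: ask a human.
        decision = Decision.REQUIRE_APPROVAL
    AUDIT_LOG.append({"agent": agent, "action": action,
                      "confidence": confidence, "decision": decision.value})
    return decision
```

Under this sketch, `disable_account` always requires approval no matter how confident the agent is, and an agent with no policy entry cannot act at all.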

Miss any layer, or implement them out of order, and the system degrades quickly.

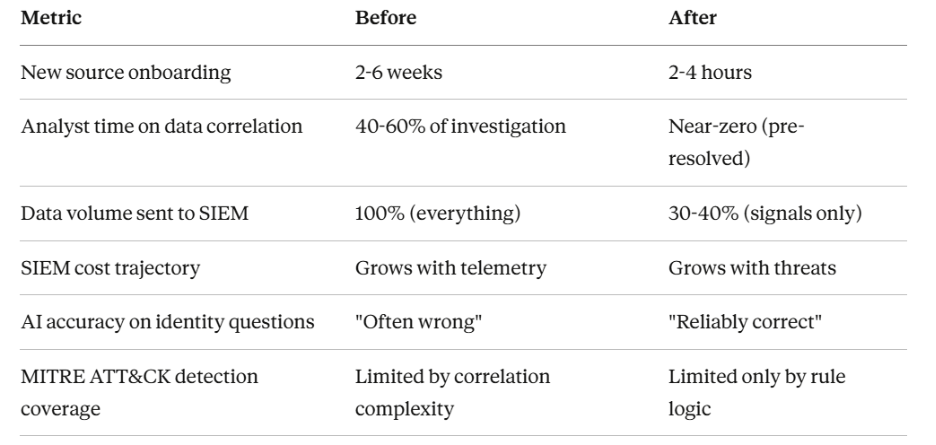

Outcomes When the Architecture Is Correct

When the six layers operate together, the impact is structural rather than cosmetic:

- Faster time to detection

Detection shifts from alert-triggered investigation to continuous, machine-speed reasoning across telemetry streams. This is the only way to contend with adversaries operating on minute-level timelines.

- Improved analyst automation

L1 and L2 workflows can be substantially automated, as agents handle triage, enrichment, correlation, and evidence gathering. Analysts spend more time validating conclusions and shaping detection logic, less time stitching data together.

- Broader and more consistent ATT&CK coverage

Detection engineering moves from manual rule authoring to agent-assisted mapping of telemetry against ATT&CK techniques, highlighting gaps and proposing new detections as environments change.

- Lower false-positive burden

Evidence grounding, confidence scoring, and cross-domain correlation reduce alert volume without suppressing signal, improving analyst trust in what reaches them.

The shift from reactive triage to proactive threat discovery becomes possible only when architectural bottlenecks such as fragmented data, late context, and human-paced correlation are removed from the system.
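
As an illustration of the ATT&CK coverage outcome described above, the following sketch separates techniques into covered, actionable gaps (telemetry exists but no detection does), and blind spots (the required telemetry is not ingested). The technique IDs are real ATT&CK identifiers, but the mapping from techniques to required log sources is a simplified assumption, as are the function and rule names.

```python
# Assumed mapping: log sources needed to detect each technique (illustrative).
TECHNIQUE_SOURCES = {
    "T1078": {"idp"},                   # Valid Accounts
    "T1110": {"idp"},                   # Brute Force
    "T1528": {"idp", "saas"},           # Steal Application Access Token
    "T1021": {"endpoint", "network"},   # Remote Services
}


def coverage_report(available_sources, detections):
    """Classify techniques by whether rules and telemetry exist for them.

    available_sources: set of log sources currently ingested
    detections: dict of rule name -> ATT&CK technique ID it targets
    """
    covered_ids = set(detections.values())
    report = {"covered": [], "actionable_gaps": [], "blind_spots": []}
    for technique, needed in sorted(TECHNIQUE_SOURCES.items()):
        if technique in covered_ids:
            report["covered"].append(technique)
        elif needed <= set(available_sources):
            # Telemetry is present, so a new detection could be proposed.
            report["actionable_gaps"].append(technique)
        else:
            # Required telemetry is missing; no rule can close this gap.
            report["blind_spots"].append(technique)
    return report
```

The split matters in practice: actionable gaps are a detection engineering problem, while blind spots send the team back to the telemetry pipeline.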

Stop Retrofitting AI Onto Broken Architecture

Most teams approach AI SOC transformation backward. They layer new intelligence onto existing SIEM workflows and expect better outcomes, without changing the architecture that constrains how detection, correlation, and response actually function.

The dependency chain is unforgiving. Without unified telemetry, detection operates on partial visibility. Without cross-domain correlation, attack paths remain fragmented. Without continuous reasoning, analysis begins only after alerts fire. And without governance, autonomy introduces risk rather than reducing it.

Agentic SOC architectures are expected to standardize across enterprises within the next one to two years (Omdia, 2025). The question is not whether SOCs become AI-native, but whether teams build deliberately from the foundation up or spend the next three years patching broken architecture while attackers continue to exploit the same coverage gaps and response delays.
