Microsoft Sentinel best practices

How SOCs can optimize Sentinel costs & performance

Enterprises and security teams are increasingly opting for Microsoft Sentinel for its comprehensive service stack, advanced threat intelligence, and automation capabilities, which facilitate faster investigations.

However, security teams are often caught off guard by how quickly Sentinel data ingestion costs escalate. As organizations scale their usage of Sentinel, the volume of data they ingest can grow sharply. This surge in data volume results in higher licensing costs, adding to the financial burden for enterprises. Beyond the cost implications, this data overload complicates threat identification and response, often resulting in delayed detections or missed signals. Security teams find themselves constantly struggling to filter noise, manage alert volumes, and maintain operational efficiency while working to extract meaningful insights from overwhelming data streams.

The Data Overload Problem for Microsoft Sentinel

One of Sentinel's biggest strengths is how easily it integrates Microsoft data sources. SIEM operators can connect Azure, Office, and other Microsoft sources to Sentinel with little effort. The challenge emerges when integrating non-Microsoft sources, which requires creating custom integrations and managing data pipelines.

For Sentinel to provide comprehensive security coverage and effective threat detection, all relevant security data must be routed through the platform. This requires connecting various security data sources such as firewalls, EDR/XDR, and even business applications to Sentinel, resulting in a 4 to 8 week data engineering effort that SOCs have to absorb.

At the same time, enterprises often stop sending firewall logs to Sentinel because of growing log volume and the cost of unexpected volume spikes, which also cause frequent breaks and other issues in the data pipelines.

Then vs. Now: Key to Faster Threat Detection

Traditional data classification methods struggle to keep pace with modern security challenges. Security teams often rely on predefined rules or manual processes to categorize and prioritize data. As volumes grow, these teams find themselves ill-equipped to handle large data ingestions, resulting in critical losses of real-time insight.

DataBahn aids Sentinel deployments by streamlining data collection and ingestion with over 400 plug-and-play connectors. The platform intelligently defines data routing between basic and analytics tables while deploying strategic staging locations to efficiently publish data from third-party products into your Sentinel environment. DataBahn’s volume reduction functions, such as aggregation and suppression, convert noisy logs like network traffic into manageable insights that can be loaded into Sentinel, reducing both data volume and overall query execution time.

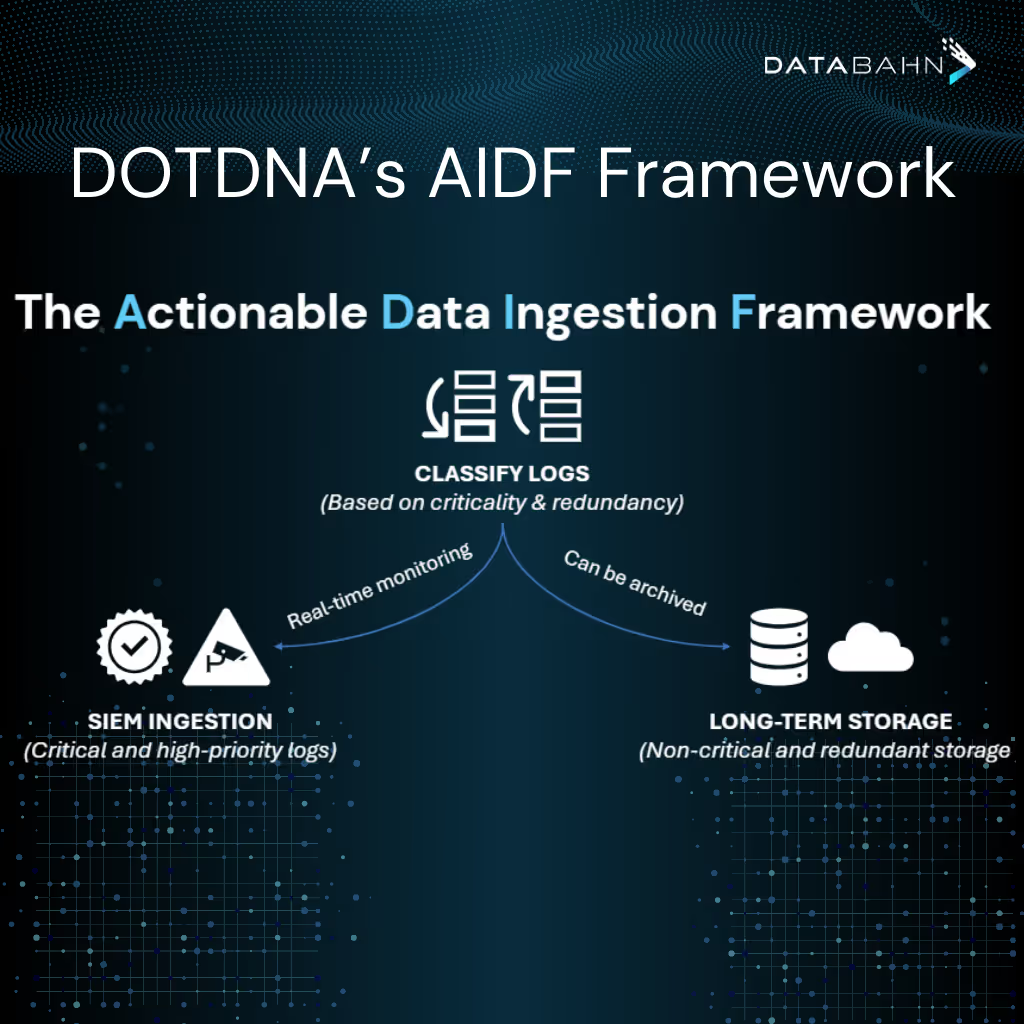
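To make the aggregation and suppression idea above more concrete, here is a minimal Python sketch. It is not DataBahn's implementation: the `FlowEvent` fields, the `suppress` and `aggregate` helpers, and the list of "noisy" ports are all hypothetical and chosen only for illustration. Suppression drops events judged to be low value, and aggregation collapses repeated flows into one summary record before anything is sent to the SIEM.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowEvent:
    """Hypothetical, simplified firewall flow record."""
    src_ip: str
    dst_ip: str
    dst_port: int
    action: str
    bytes_sent: int


def suppress(events, noisy_ports=frozenset({53, 123})):
    """Drop allowed traffic to ports on an assumed low-value list (DNS, NTP)."""
    return [
        e for e in events
        if not (e.action == "allow" and e.dst_port in noisy_ports)
    ]


def aggregate(events):
    """Collapse events sharing (src, dst, port, action) into one summary row."""
    totals = defaultdict(lambda: {"count": 0, "bytes_sent": 0})
    for e in events:
        key = (e.src_ip, e.dst_ip, e.dst_port, e.action)
        totals[key]["count"] += 1
        totals[key]["bytes_sent"] += e.bytes_sent
    return [
        {"src_ip": k[0], "dst_ip": k[1], "dst_port": k[2], "action": k[3], **v}
        for k, v in totals.items()
    ]


# Example input: four raw events, two of which describe the same flow.
events = [
    FlowEvent("10.0.0.5", "10.0.1.9", 443, "allow", 1200),
    FlowEvent("10.0.0.5", "10.0.1.9", 443, "allow", 800),
    FlowEvent("10.0.0.5", "8.8.8.8", 53, "allow", 60),
    FlowEvent("10.0.0.7", "10.0.1.9", 22, "deny", 0),
]

# Suppress first, then aggregate what remains.
summary = aggregate(suppress(events))
for row in summary:
    print(row)
```

In this toy input, four raw events become two summary rows. Real pipelines would typically aggregate over a time window and keep denied or anomalous traffic unsuppressed, but the shape of the reduction is the same.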

DOTDNA's ADIF Framework

DOTDNA has developed and promotes the Actionable Data Ingestion Framework (ADIF), designed to separate signal from noise by sorting your log data into two camps: critical, high-priority logs that are sent to the Security Information and Event Management (SIEM) platform for real-time analysis, and non-critical background data that can be kept long-term in cost-effective storage.

The framework streamlines log ingestion processes, prioritizes truly critical security events, eliminates redundancy, and precisely aligns with your specific security use cases. This targeted approach ensures your CyberOps team remains focused on high-priority, actionable data, enabling enhanced threat detection and more efficient response. The result is improved operational efficiency and significant cost savings. The framework guarantees that only actionable information is processed, facilitating faster investigations and better resource allocation.
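The two-camp split described above can be sketched as a simple routing function. This is an illustrative assumption, not DOTDNA's actual ADIF rule set: the `Destination` enum, the `CRITICAL_EVENT_TYPES` set, the `severity` field, and the threshold of 7 are all hypothetical. In practice, the rules would be derived from your own detection use cases.

```python
from enum import Enum


class Destination(Enum):
    SIEM = "siem"        # real-time analysis
    ARCHIVE = "archive"  # low-cost long-term storage


# Hypothetical event types that map to active detection use cases.
CRITICAL_EVENT_TYPES = {
    "auth_failure",
    "malware_detected",
    "privilege_escalation",
}

# Hypothetical severity cutoff on an assumed 0-10 scale.
SEVERITY_THRESHOLD = 7


def route(event: dict) -> Destination:
    """Send an event to the SIEM only if it looks actionable."""
    if event.get("event_type") in CRITICAL_EVENT_TYPES:
        return Destination.SIEM
    if event.get("severity", 0) >= SEVERITY_THRESHOLD:
        return Destination.SIEM
    return Destination.ARCHIVE


sample = [
    {"event_type": "auth_failure", "severity": 3},
    {"event_type": "heartbeat", "severity": 1},
    {"event_type": "config_change", "severity": 8},
]
for event in sample:
    print(event["event_type"], "->", route(event).value)
```

Keeping the rules in plain data (a set and a threshold) rather than in branching logic makes them easier to review against the use cases they are meant to serve.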

The Real Impact

Following an acquisition, a UK-based enterprise needed to consolidate multiple SIEM and SOC providers into a single Sentinel instance while effectively managing data volumes and license costs. DOTDNA implemented DataBahn's Data Fabric to architect a solution that intelligently filters, optimizes, and dynamically tags and routes only security-relevant data to Sentinel, enabling the enterprise to substantially reduce its ingestion and data storage costs.

Optimizing Log Implementation via DOTDNA: Through the strategic implementation of this architecture, DOTDNA created a targeted solution that prioritizes genuine security signals before routing to Sentinel. This precision approach reduced the firm's ingestion and data storage costs by $230,000 annually while maintaining comprehensive security visibility across all systems.

Reduced Sentinel Ingestion Costs via DataBahn’s Data Fabric: The DataBahn Data Fabric Solution precisely orchestrates data flows, extracting meaningful security insights and delivering only relevant information to your Sentinel SIEM. This strategic filtering achieves a significant reduction in data volume without compromising security visibility, maximizing both your security posture and ROI.

Conclusion

As data volumes continue to grow, DataBahn's Data Fabric empowers security teams to shift from reactive firefighting to proactive threat hunting. Without a modern data classification framework like ADIF, security teams risk being overwhelmed by irrelevant data, potentially leading to missed threats and delayed responses. Take control of your security data today with a strategic approach that prioritizes actionable intelligence. By implementing a solution that delivers only the most relevant data to your security tools, you can transform your security operations from data overload to precision threat detection. Effective security isn't about more data; it's about the right data.

This post is based on a conversation between Davide, Founder of DOTDNA, and DataBahn's CPO, Aditya Sundararam. You can view this conversation on LinkedIn here.
